nono provides two ways to keep credentials out of the sandboxed process:

- Proxy injection (--credential) - The agent talks to a local reverse proxy that injects real API keys on the fly. The credential never enters the sandbox, not even as an environment variable. This is the recommended approach for LLM API keys.

- Environment variable injection (--env-credential) - Loads secrets from the system keystore and injects them as environment variables before the sandbox is applied. Simpler, but the secret is visible in the process environment.

Proxy Injection (Recommended)

The proxy acts as a reverse proxy for configured credential routes. The agent sends plain HTTP to localhost:<port>/<service>/... and the proxy:

- Strips the service prefix

- Injects the real credential as an HTTP header

- Forwards to the upstream over TLS

- Streams the response back

Agent sends: POST http://127.0.0.1:PORT/openai/v1/chat/completions

Proxy sends: POST https://api.openai.com/v1/chat/completions

Authorization: Bearer sk-... (injected from keystore)
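The rewrite steps listed above can be sketched in a few lines of Python. This is only an illustration of the idea, not nono's implementation: the ROUTES table, the rewrite_request function, and the sample credential are hypothetical, and the route values are copied from the Anthropic examples elsewhere in this document.

```python
# Hypothetical route table for illustration; values mirror this document's examples.
ROUTES = {
    "anthropic": {
        "upstream": "https://api.anthropic.com",
        "inject_header": "x-api-key",
        "credential_format": "{}",
    },
}


def rewrite_request(path: str, headers: dict, real_credential: str):
    """Map an inbound /<service>/... request to an upstream URL and header set."""
    # 1. Strip the service prefix.
    service, _, rest = path.lstrip("/").partition("/")
    route = ROUTES[service]  # raises KeyError for an unconfigured service
    upstream_url = route["upstream"].rstrip("/") + "/" + rest

    # 2. Drop whatever the client put in the credential header, then
    #    inject the real credential using the route's format string.
    name = route["inject_header"]
    outbound = {k: v for k, v in headers.items() if k.lower() != name.lower()}
    outbound[name] = route["credential_format"].replace("{}", real_credential)

    # 3. The caller would now forward to upstream_url over TLS and stream back.
    return upstream_url, outbound
```

Calling rewrite_request("/anthropic/v1/messages", {...}, "sk-ant-real") yields the upstream URL https://api.anthropic.com/v1/messages with the real key in x-api-key, while other application headers pass through unchanged.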

Quick Start

# 1. Store credentials in the system keystore

security add-generic-password -s "nono" -a "openai" -w "sk-..." # macOS

# 2. Run with credential injection

nono run --allow-cwd --network-profile claude-code --credential openai -- my-agent

The proxy sets OPENAI_BASE_URL=http://127.0.0.1:<port>/openai in the child’s environment. Most LLM SDKs respect this variable and redirect API calls through the proxy automatically.

Storing Credentials

Built-in credential routes load secrets from the source defined in network-policy.json. If a built-in route omits credential_key, the keyring username defaults to the service name under the keyring service nono.

| CLI Service | Credential Source |
|---|---|
| openai | keyring service nono, username openai |
| anthropic | host environment variable ANTHROPIC_API_KEY via env://ANTHROPIC_API_KEY |
| gemini | keyring service nono, username gemini |
| google-ai | keyring service nono, username google-ai |
| github | host environment variable GITHUB_TOKEN via env://GITHUB_TOKEN |
| gitlab | host environment variable GITLAB_TOKEN via env://GITLAB_TOKEN |

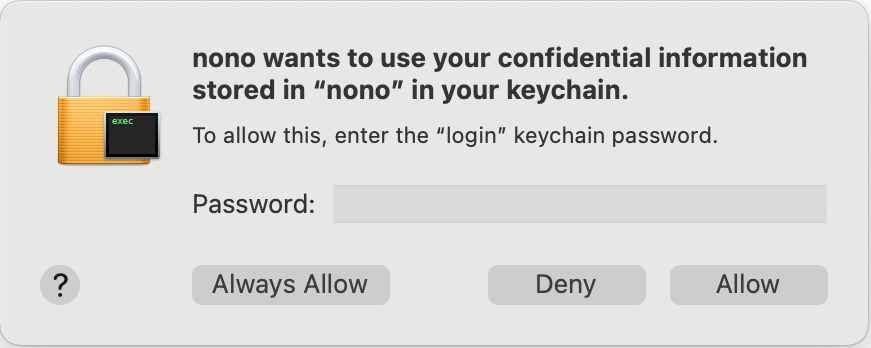

macOS

security add-generic-password -s "nono" -a "openai" -w "sk-..."

security add-generic-password -s "nono" -a "gemini" -w "your-gemini-key"

security add-generic-password -s "nono" -a "google-ai" -w "your-google-ai-key"

# Built-in Anthropic, GitHub, and GitLab routes read from host env vars.

export ANTHROPIC_API_KEY="sk-ant-..."

export GITHUB_TOKEN="ghp_..."

export GITLAB_TOKEN="glpat-..."

Linux

The keyring crate uses service, username, and target attributes. You must use these exact attribute names:

echo -n "sk-..." | secret-tool store --label="nono: openai" \
  service nono username openai target default

echo -n "your-gemini-key" | secret-tool store --label="nono: gemini" \
  service nono username gemini target default

echo -n "your-google-ai-key" | secret-tool store --label="nono: google-ai" \
  service nono username google-ai target default

Use username (not account) as the attribute name. The target default attribute is required for the keyring crate to find the entry. Built-in Anthropic, GitHub, and GitLab routes read from host environment variables instead of the keyring.

1Password Integration

nono supports 1Password op:// URIs as a credential source anywhere you would use a keyring account name. For CLI env injection, use --env-credential-map <op://...> <ENV_VAR>. This also works in profile-based credentials (env_credentials, custom_credentials). The 1Password CLI (op) must be installed and authenticated.

Finding Your Secret Path

op:// URIs have the format op://<vault>/<item>/<field>. Use the op CLI to discover each segment:

# 1. List your vaults

op vault list

# 2. List items in a vault

op item list --vault Development

# 3. See all fields on an item

op item get "OpenAI API Key" --vault Development

# 4. The resulting URI

op://Development/OpenAI API Key/credential

Field names depend on the item type: some items expose a password field, “API Credential” items typically have credential, and custom items use whatever field labels you set. Run op item get to see all available fields for a given item.
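To make the op://<vault>/<item>/<field> layout concrete, here is a minimal parsing sketch. The OpRef type and parse_op_uri function are hypothetical names used for illustration. The sketch covers only the three-segment form described here and is not how nono or the op CLI resolves references.

```python
from typing import NamedTuple


class OpRef(NamedTuple):
    vault: str
    item: str
    field: str


def parse_op_uri(uri: str) -> OpRef:
    """Split an op://<vault>/<item>/<field> reference into its segments."""
    prefix = "op://"
    if not uri.startswith(prefix):
        raise ValueError(f"not an op:// URI: {uri!r}")
    parts = uri[len(prefix):].split("/")
    # Exactly three non-empty segments are expected in this simplified form.
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected op://<vault>/<item>/<field>, got {uri!r}")
    return OpRef(*parts)
```

Item names may contain spaces, so op://Development/OpenAI API Key/credential parses into the vault Development, the item OpenAI API Key, and the field credential.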

CLI: Direct Environment Variable Injection

Pass an op:// URI to --env-credential-map with an explicit destination env var:

# Single 1Password secret

nono run --allow . --env-credential-map 'op://Development/OpenAI/credential' OPENAI_API_KEY -- my-agent

# Multiple secrets (mixed keyring + 1Password)

nono run --allow . \
  --env-credential openai_api_key \
  --env-credential-map 'op://Development/Anthropic/api-key' ANTHROPIC_API_KEY \
  -- my-agent

--env-credential 'op://vault/item/field=MY_VAR'.

Profile: Environment Credential Injection

In a profile’s env_credentials section, use an op:// URI as the key instead of a keyring account name:

{

"meta": { "name": "my-agent" },

"env_credentials": {

"op://Development/OpenAI/credential": "OPENAI_API_KEY"

}

}

nono run --profile my-agent -- my-agent

# Child process sees: OPENAI_API_KEY=sk-actual-secret-value

Profile: Proxy Credential Injection

For network API keys, proxy injection is recommended — the child process never sees the real secret. Use an op:// URI in credential_key:

{

"meta": { "name": "my-agent-secure" },

"network": {

"custom_credentials": {

"openai": {

"upstream": "https://api.openai.com/v1",

"credential_key": "op://Development/OpenAI API Key/credential",

"env_var": "OPENAI_API_KEY",

"inject_header": "Authorization",

"credential_format": "Bearer {}"

},

"anthropic": {

"upstream": "https://api.anthropic.com",

"credential_key": "op://Development/Anthropic/api-key",

"env_var": "ANTHROPIC_API_KEY",

"inject_header": "x-api-key",

"credential_format": "{}"

}

},

"credentials": ["openai", "anthropic"]

}

}

nono run --profile my-agent-secure -- my-agent

# Child process sees: OPENAI_API_KEY=nono_sess_a1b2c3... (phantom token)

# Proxy transparently swaps to real Bearer sk-... when forwarding to api.openai.com

Mixed Mode: Environment + Proxy Credentials

You can combine both injection modes in a single profile. Use env_credentials for non-network secrets (database passwords, tokens) and custom_credentials with proxy injection for API keys:

{

"meta": { "name": "mixed-example" },

"env_credentials": {

"op://Infrastructure/Database/password": "DATABASE_PASSWORD"

},

"network": {

"custom_credentials": {

"openai": {

"upstream": "https://api.openai.com/v1",

"credential_key": "op://Development/OpenAI/credential",

"env_var": "OPENAI_API_KEY",

"inject_header": "Authorization",

"credential_format": "Bearer {}"

}

},

"credentials": ["openai"]

}

}

nono run --profile mixed-example -- my-app

# Child process sees:

# - DATABASE_PASSWORD=... (from 1Password via env_credentials)

# - OPENAI_API_KEY=nono_sess_... (phantom token from credentials)

Apple Passwords Integration (macOS)

nono supports Apple Passwords entries via apple-password:// URIs in both --env-credential-map and profile credential fields (env_credentials, custom_credentials.credential_key).

URI format:

apple-password://<server>/<account>

- server: website/service hostname (for example, github.com)

- account: account/username for that entry (for example, alice@example.com)

nono resolves this URI using macOS security find-internet-password -s <server> -a <account> -w.
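As a rough illustration of that mapping, the sketch below turns an apple-password:// URI into the argument list for the security command quoted above. The function name security_argv is hypothetical. The sketch only builds the arguments, and it splits on the first slash after the scheme so that an account such as alice@example.com stays intact.

```python
def security_argv(uri: str) -> list[str]:
    """Build the `security find-internet-password` argv for an apple-password:// URI."""
    prefix = "apple-password://"
    if not uri.startswith(prefix):
        raise ValueError(f"not an apple-password:// URI: {uri!r}")
    # Everything before the first "/" is the server; the rest is the account.
    server, sep, account = uri[len(prefix):].partition("/")
    if not (server and sep and account):
        raise ValueError("expected apple-password://<server>/<account>")
    return ["security", "find-internet-password", "-s", server, "-a", account, "-w"]
```

Passing the list to a process launcher without a shell avoids quoting problems if the server or account contains unusual characters.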

CLI: Direct Environment Variable Injection

# Single Apple Passwords secret

nono run --allow . --env-credential-map 'apple-password://github.com/alice@example.com' GITHUB_PASSWORD -- my-agent

# Mixed with keyring + 1Password

nono run --allow . \
  --env-credential openai_api_key \
  --env-credential-map 'op://Development/OpenAI/credential' OPENAI_API_KEY \
  --env-credential-map 'apple-password://github.com/alice@example.com' GITHUB_PASSWORD \
  -- my-agent

Use --env-credential-map so the credential reference and destination variable are unambiguous.

Profile: Environment Credential Injection

{

"meta": { "name": "my-agent" },

"env_credentials": {

"apple-password://github.com/alice@example.com": "GITHUB_PASSWORD"

}

}

Profile: Proxy Credential Injection

{

"meta": { "name": "my-agent-secure" },

"network": {

"custom_credentials": {

"github_api": {

"upstream": "https://api.github.com",

"credential_key": "apple-password://github.com/alice@example.com",

"env_var": "GITHUB_PASSWORD",

"inject_header": "Authorization",

"credential_format": "token {}"

}

},

"credentials": ["github_api"]

}

}

Bitwarden Integration

nono supports Bitwarden entries via bw:// URIs in both --env-credential-map and profile credential fields (env_credentials, custom_credentials.credential_key). The Bitwarden CLI (bw) must be installed and authenticated.

URI format:

bw://<item-id>

bw://<item-id>/<field>

Set env_var explicitly when credential_key uses bw://:

{

"network": {

"custom_credentials": {

"openai": {

"upstream": "https://api.openai.com/v1",

"credential_key": "bw://12345678-1234-1234-1234-123456789abc/api-key",

"env_var": "OPENAI_API_KEY",

"inject_header": "Authorization",

"credential_format": "Bearer {}"

}

},

"credentials": ["openai"]

}

}

Custom Keyring Service

Use keyring://<service>/<account> when a credential lives in the system keyring under a service name other than nono:

{

"env_credentials": {

"keyring://my-service/openai_api_key": "OPENAI_API_KEY"

}

}

Environment Variables

When credential routes are configured, the proxy sets SDK-specific base URL environment variables:

| Route | Environment Variable | Value |
|---|---|---|
| openai | OPENAI_BASE_URL | http://127.0.0.1:<port>/openai |
| anthropic | ANTHROPIC_BASE_URL | http://127.0.0.1:<port>/anthropic |

Credential Route Configuration

The built-in network-policy.json defines default credential routes:

All services in this table can be used directly with --credential <service> (e.g. --credential github, --credential anthropic) without any custom credential definition in your profile.

Note: OpenAI’s upstream includes /v1 because the OpenAI SDK expects the base URL to include the version prefix. Anthropic’s SDK adds /v1/messages automatically, so its upstream is the root URL.

Using Credentials in Profiles

User profiles can specify which credential services to enable in the network section:

{

"meta": { "name": "my-agent" },

"filesystem": {

"allow": ["$WORKDIR"]

},

"network": {

"network_profile": "claude-code",

"credentials": ["openai", "anthropic"]

}

}

Custom Credential Definitions

For APIs not covered by the built-in services, you can define custom credentials in your profile. This lets you use --credential with any API while keeping credentials out of the sandbox.

{

"meta": { "name": "my-agent" },

"network": {

"network_profile": "minimal",

"credentials": ["openai", "telegram"],

"custom_credentials": {

"telegram": {

"upstream": "https://api.telegram.org",

"credential_key": "telegram_bot_token",

"inject_header": "Authorization",

"credential_format": "Bearer {}"

}

}

}

}

| Field | Required | Default | Description |
|---|---|---|---|
| upstream | Yes | - | Upstream URL to proxy requests to (must be HTTPS, or HTTP for localhost only) |
| credential_key | Yes | - | Keystore account name (alphanumeric and underscores only), op:// URI for 1Password, bw:// URI for Bitwarden, apple-password:// URI for Apple Passwords, keyring:// URI for a custom keyring service, file:// URI for file-backed secrets, or env:// URI for host environment variables (e.g., env://MY_TOKEN) |
| inject_mode | No | header | Credential injection mode: header, url_path, query_param, or basic_auth |
| inject_header | No | Authorization | HTTP header to inject the credential into (used with header and basic_auth modes) |
| credential_format | No | Bearer {} | Format string for the credential value ({} is replaced with the credential) |
| path_pattern | Conditional | - | Required for url_path mode. URL path pattern with {} placeholder (e.g., /bot{}/) |
| path_replacement | No | - | Optional replacement pattern for url_path mode (e.g., /v2/bot{}/) |
| query_param_name | Conditional | - | Required for query_param mode. Query parameter name for credential injection (e.g., key or api_key) |
| proxy | No | - | Optional proxy overrides for phantom token parsing. Omitted fields inherit from top-level values. See Proxy-Side Overrides. |
| env_var | Conditional | - | Explicit environment variable name for the phantom token. Required when credential_key is op://, bw://, apple-password://, or file://. Optional for env:// (derived from the URI) |

Use underscores, not hyphens, in credential names (the keys in custom_credentials). The credential name is used to generate environment variables like TELEGRAM_BASE_URL. Shell variable names cannot contain hyphens, so my-api would create MY-API_BASE_URL, which cannot be referenced as $MY-API_BASE_URL in shell scripts. Use my_api instead.
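The naming rule can be checked before a profile is written. The sketch below is an assumption-laden illustration: base_url_env is a hypothetical helper, the uppercase-plus-_BASE_URL derivation is inferred from the examples in this document, and the identifier check is added here for illustration rather than taken from nono.

```python
import re


def base_url_env(credential_name: str, port: int) -> tuple[str, str]:
    """Return the (variable name, value) pair a credential name would produce."""
    # Illustrative check: reject names that cannot form a shell variable,
    # such as "my-api", instead of emitting an unusable MY-API_BASE_URL.
    if not re.fullmatch(r"[A-Za-z_][A-Za-z0-9_]*", credential_name):
        raise ValueError(f"{credential_name!r} is not a valid shell identifier")
    variable = f"{credential_name.upper()}_BASE_URL"
    value = f"http://127.0.0.1:{port}/{credential_name}"
    return variable, value
```

For example, base_url_env("telegram", 8080) gives TELEGRAM_BASE_URL with the value http://127.0.0.1:8080/telegram, while base_url_env("my-api", 8080) raises ValueError.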

Injection Modes

Custom credentials support multiple injection patterns to accommodate different API authentication schemes:

Header Mode (default)

Injects the credential as an HTTP header with optional formatting. This is the most common authentication pattern.

{

"custom_credentials": {

"telegram": {

"upstream": "https://api.telegram.org",

"credential_key": "telegram_bot_token",

"inject_mode": "header",

"inject_header": "Authorization",

"credential_format": "Bearer {}"

}

}

}

URL Path Mode

Replaces a phantom token in the URL path with the real credential. Useful for APIs like Telegram Bot API that embed authentication tokens in the path (e.g., /bot{token}/method).

{

"custom_credentials": {

"telegram": {

"upstream": "https://api.telegram.org",

"credential_key": "telegram_bot_token",

"inject_mode": "url_path",

"path_pattern": "/bot{}/",

"path_replacement": "/bot{}/"

}

}

}

Agent sends: POST http://127.0.0.1:PORT/telegram/bot<NONO_PROXY_TOKEN>/sendMessage

Proxy sends: POST https://api.telegram.org/bot<REAL_TOKEN>/sendMessage
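A minimal sketch of the path substitution, assuming the proxy first checks that the phantom token appears where path_pattern expects it. The function swap_path_token and the sample token values are hypothetical; the real proxy's matching rules may differ.

```python
def swap_path_token(path: str, pattern: str, replacement: str,
                    phantom: str, real: str) -> str:
    """Replace the phantom token embedded in a URL path with the real credential."""
    expected = pattern.replace("{}", phantom)
    if expected not in path:
        # A proxy would answer 401 Unauthorized here.
        raise PermissionError("phantom token missing or invalid")
    # Substitute only the first occurrence of the matched pattern.
    return path.replace(expected, replacement.replace("{}", real), 1)
```

With path_pattern and path_replacement both set to /bot{}/, the path /bot<phantom>/sendMessage becomes /bot<real>/sendMessage. A different path_replacement such as /v2/bot{}/ would also rewrite the prefix.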

Query Parameter Mode

Adds or replaces a query parameter with the credential value. Common for APIs that use URL query parameters for authentication (e.g., Google Maps API).

{

"custom_credentials": {

"google_maps": {

"upstream": "https://maps.googleapis.com",

"credential_key": "google_maps_api_key",

"inject_mode": "query_param",

"query_param_name": "key"

}

}

}

Agent sends: GET http://127.0.0.1:PORT/google_maps/maps/api/geocode/json?key=<NONO_PROXY_TOKEN>&address=...

Proxy sends: GET https://maps.googleapis.com/maps/api/geocode/json?key=<REAL_API_KEY>&address=...
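The query-parameter swap can be sketched with Python's standard urllib.parse module. The function swap_query_token and the sample values are hypothetical, and the sketch assumes the phantom token must be present in the named parameter before it is replaced.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def swap_query_token(url: str, param: str, phantom: str, real: str) -> str:
    """Replace the phantom token in one query parameter with the real credential."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    if (param, phantom) not in pairs:
        # A proxy would answer 401 Unauthorized here.
        raise PermissionError("phantom token missing or invalid")
    # Keep parameter order; only the named parameter changes.
    swapped = [(k, real if k == param else v) for k, v in pairs]
    return urlunsplit(parts._replace(query=urlencode(swapped)))
```

Other parameters, such as address in the geocoding example, are passed through in their original order.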

Basic Auth Mode

Injects a Base64-encoded Basic Authentication header. The credential value should be stored in username:password format in the keystore.

{

"custom_credentials": {

"private_api": {

"upstream": "https://api.example.com",

"credential_key": "example_basic_auth",

"inject_mode": "basic_auth"

}

}

}

Store the credential in username:password format:

# macOS

security add-generic-password -s "nono" -a "example_basic_auth" -w "myuser:mypassword"

# Linux

echo -n "myuser:mypassword" | secret-tool store --label="nono: example_basic_auth" \
  service nono username example_basic_auth target default

The proxy Base64-encodes the stored value and injects it as Authorization: Basic <encoded>.
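For reference, the standard Basic scheme encoding looks like the sketch below. The function name basic_auth_header is hypothetical, and the check for a colon is an illustrative safeguard rather than documented nono behavior.

```python
import base64


def basic_auth_header(stored: str) -> str:
    """Encode a stored "username:password" value as a Basic Authorization header value."""
    if ":" not in stored:
        raise ValueError("expected the credential in username:password format")
    encoded = base64.b64encode(stored.encode("utf-8")).decode("ascii")
    return f"Basic {encoded}"
```

Base64 is an encoding, not encryption, which is why the upstream hop still needs TLS.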

Proxy Overrides

By default, the same inject_mode and related fields control both how the proxy validates the incoming phantom token from the sandboxed client and how it injects the real credential into the outbound upstream request. For most APIs these are identical, but some clients require a different shape on the proxy side.

One such case is kubectl: it does not send a Bearer token to a non-HTTPS proxy, so the proxy needs to accept the phantom token as a query parameter while still injecting the real credential as a header upstream.

The optional proxy block lets you override any of the inbound-parsing settings independently:

{

"custom_credentials": {

"k8s": {

"upstream": "https://kubernetes.default.svc",

"credential_key": "k8s_token",

"inject_mode": "header",

"inject_header": "Authorization",

"credential_format": "Bearer {}",

"proxy": {

"inject_mode": "query_param",

"query_param_name": "token"

}

}

}

}

The following fields can be set in the proxy block:

| Field | Description |
|---|---|
| inject_mode | Override the injection mode used to parse inbound phantom tokens. |
| inject_header | Override the header name for header / basic_auth mode parsing. |
| credential_format | Override the format string check for header mode. |
| path_pattern | Override the path pattern for url_path mode. |
| path_replacement | Override the path replacement for url_path mode. |
| query_param_name | Override the query parameter name for query_param mode. |

Any field omitted from the proxy block falls back to the corresponding top-level value. Outbound upstream credential injection always uses the top-level fields.
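The fallback rule amounts to overlaying the proxy block on the top-level fields for inbound parsing only. The sketch below illustrates that with plain dictionaries. The helper names inbound_settings and outbound_settings are hypothetical, and the route mirrors the k8s example above.

```python
OVERRIDABLE = (
    "inject_mode", "inject_header", "credential_format",
    "path_pattern", "path_replacement", "query_param_name",
)


def inbound_settings(route: dict) -> dict:
    """Settings used to parse the phantom token: proxy overrides win, else top level."""
    overrides = route.get("proxy", {})
    return {
        field: overrides.get(field, route.get(field))
        for field in OVERRIDABLE
        if field in overrides or field in route
    }


def outbound_settings(route: dict) -> dict:
    """Settings used to inject the real credential: top-level fields only."""
    return {field: route[field] for field in OVERRIDABLE if field in route}


k8s = {
    "upstream": "https://kubernetes.default.svc",
    "credential_key": "k8s_token",
    "inject_mode": "header",
    "inject_header": "Authorization",
    "credential_format": "Bearer {}",
    "proxy": {"inject_mode": "query_param", "query_param_name": "token"},
}
```

For the k8s route, inbound_settings reports query_param mode with the parameter token, while outbound_settings still reports header mode with Authorization and Bearer {}.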

Phantom Token Validation

For url_path and query_param modes, the agent must include the session token (NONO_PROXY_TOKEN) as a placeholder in the request. The proxy validates this phantom token before replacing it with the real credential. Invalid or missing phantom tokens result in HTTP 401 Unauthorized responses.

Store the credential in the system keystore:

# macOS

security add-generic-password -s "nono" -a "telegram_bot_token" -w "your-bot-token"

# Linux

echo -n "your-bot-token" | secret-tool store --label="nono: telegram_bot_token" \
  service nono username telegram_bot_token target default

nono run --profile my-agent --credential telegram -- my-bot

{

"network": {

"custom_credentials": {

"openai": {

"upstream": "https://my-openai-proxy.example.com/v1",

"credential_key": "my_openai_key",

"inject_header": "Authorization",

"credential_format": "Bearer {}"

}

}

}

}

Security Validation

Custom credentials are validated at startup:

- Upstream URL must be HTTPS (HTTP is only allowed for localhost, 127.0.0.1, or ::1)

- Credential key must be alphanumeric (letters, numbers, and underscores only) unless it uses a supported URI scheme such as op://, bw://, apple-password://, keyring://, file://, or env://

Invalid configurations will fail with a clear error message before the sandbox is applied.
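The two rules can be expressed as a small pre-flight check, for instance to lint a profile before running it. This is a sketch under the rules as stated here: validate_upstream and validate_credential_key are hypothetical names, and nono's own validation may be stricter.

```python
import re
from urllib.parse import urlsplit

URI_SCHEMES = ("op://", "bw://", "apple-password://", "keyring://", "file://", "env://")
LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def validate_upstream(url: str) -> None:
    """Accept HTTPS anywhere, and plain HTTP only for loopback hosts."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        return
    # urlsplit().hostname strips the brackets from IPv6 literals like [::1].
    if parts.scheme == "http" and parts.hostname in LOOPBACK_HOSTS:
        return
    raise ValueError(f"upstream must be HTTPS (or HTTP on localhost): {url!r}")


def validate_credential_key(key: str) -> None:
    """Accept a supported URI scheme or a plain alphanumeric/underscore name."""
    if key.startswith(URI_SCHEMES):
        return
    if re.fullmatch(r"[A-Za-z0-9_]+", key):
        return
    raise ValueError(f"invalid credential key: {key!r}")
```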

Session Token Authentication

Reverse proxy requests are authenticated using the session token. The proxy generates a unique 256-bit token per session and passes it to the child via the NONO_PROXY_TOKEN environment variable.

For credential routes, the sandboxed client must present that token in the configured inbound credential location: the configured header, URL path placeholder, or query parameter. With the default header mode, this is the credential header itself, such as Authorization for OpenAI or x-api-key for Anthropic. Requests without a valid phantom token are rejected with 401 Unauthorized.

For no-credential reverse proxy routes, authentication uses proxy auth and invalid or missing credentials are rejected with 407 Proxy Authentication Required.

This prevents other localhost processes from accessing the credential injection routes.
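A 256-bit session token and its check can be sketched with Python's standard library. The hex encoding and the constant-time comparison are assumptions made for illustration. The function names are hypothetical, and the sketch does not reproduce nono's actual token format.

```python
import hmac
import secrets


def new_session_token() -> str:
    """Generate a random 256-bit token (32 bytes, hex-encoded)."""
    return secrets.token_hex(32)


def token_matches(presented: str, expected: str) -> bool:
    """Compare tokens in constant time to avoid leaking information through timing."""
    return hmac.compare_digest(presented.encode("utf-8"), expected.encode("utf-8"))
```

A request whose token fails token_matches would be answered with 401 on credential routes, or 407 on no-credential routes, as described above.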

Endpoint Filtering

Credential routes can be restricted to specific HTTP method+path combinations using --allow-endpoint or endpoint_rules in custom credential definitions. See Networking — Endpoint Filtering for full documentation including pattern syntax.

WSL2 Limitations

On WSL2, proxy-based credential injection (--credential) is blocked by default. The proxy itself works, but the network lockdown that prevents the child from bypassing the proxy cannot be kernel-enforced — WSL2’s seccomp notify conflict (microsoft/WSL#9548) blocks the fallback, and Landlock V4 (kernel 6.7+) is not yet available.

Environment variable injection (--env-credential) works normally on WSL2 — it does not depend on the proxy.

To opt in to proxy mode without network enforcement, set wsl2_proxy_policy: "insecure_proxy" in your profile’s security config. See Credential Proxy on WSL2 for details.

Go CLI tools (gh, terraform) on macOS ignore SSL_CERT_FILE and verify TLS exclusively via the system trust store (com.apple.trustd). By default, they reject nono’s proxy-minted certificates with x509: certificate is not trusted.

To fix this, add --trust-proxy-ca which persists the proxy CA in macOS Keychain and adds it to the user trust store:

nono run --allow-cwd --credential github --trust-proxy-ca -- gh api /user

--proxy-ca-validity).

See --trust-proxy-ca for details.

Security Properties

- Credentials never enter the sandbox - The agent process has no access to API keys, even through environment variables or memory

- Session token isolation - Credential reverse proxy routes validate the phantom token in the configured header, path, or query parameter; no-credential reverse routes and CONNECT tunnels use proxy auth

- Managed secret sources - Credentials can be loaded from the OS keyring, 1Password, Bitwarden, Apple Passwords, explicit files, or host environment variables

- Zeroized in memory - Credential values are stored in Zeroizing<String> and wiped from memory on drop

- Session-scoped - Credentials are loaded once at proxy startup and never written to disk or logged

- Header stripping - Credential routes strip the configured credential header before injecting the real credential, along with hop-by-hop headers. No-credential reverse routes pass application headers such as Authorization through.

Audit Logging

Reverse proxy requests are logged with the service name and status code, but credential values are never logged:

ALLOW REVERSE openai POST /v1/chat/completions -> 200

ALLOW REVERSE anthropic POST /v1/messages -> 200

Environment Variable Injection

For credentials that don’t need proxy-based protection (e.g., database URLs, custom tokens), you can load secrets from the system keystore and inject them as environment variables.

Quick Start

# 1. Store a secret in the system keystore

security add-generic-password -s "nono" -a "openai_api_key" -w "sk-..." # macOS

# 2. Run with env-credential injection

nono run --allow-cwd --env-credential openai_api_key -- my-agent

The secret is exposed to the child process as $OPENAI_API_KEY (uppercased account name).

How It Works

1. nono loads secrets from keystore BEFORE sandbox is applied

2. Sandbox is applied (blocks keystore access)

3. Secrets injected as environment variables

4. Command executed with secrets available

5. Secrets zeroized from memory after exec()

Storing Secrets

All nono secrets are stored under the service name nono in the system keystore.

macOS Keychain

# Interactive (prompts for password)

security add-generic-password -s "nono" -a "openai_api_key" -w

# Non-interactive (password on command line - less secure)

security add-generic-password -s "nono" -a "openai_api_key" -w "sk-..."

# Update an existing secret

security add-generic-password -s "nono" -a "openai_api_key" -w "new-value" -U

# Delete a secret

security delete-generic-password -s "nono" -a "openai_api_key"

# List all nono secrets

security dump-keychain | grep -A5 "nono"

- Open Keychain Access (search in Spotlight or find in /Applications/Utilities/)

- Select the login keychain in the sidebar

- Click File > New Password Item (or press Cmd+N)

- Fill in: Keychain Item Name: nono, Account Name: openai_api_key, Password: your API key

- Click Add

When nono accesses a secret for the first time, macOS will prompt you to allow access. Click Always Allow to avoid repeated prompts.

Linux Secret Service

Linux uses the Secret Service API, typically provided by GNOME Keyring or KWallet. You need secret-tool (part of libsecret-tools) and a running keyring daemon.

Installation:

sudo apt install libsecret-tools gnome-keyring # Debian/Ubuntu

sudo dnf install libsecret gnome-keyring # Fedora

sudo pacman -S libsecret gnome-keyring # Arch Linux

# Store (prompts for password)

secret-tool store --label="nono: openai_api_key" service nono username openai_api_key target default

# Store non-interactively

echo -n "sk-..." | secret-tool store --label="nono: openai_api_key" service nono username openai_api_key target default

# Retrieve (for testing)

secret-tool lookup service nono username openai_api_key target default

# Delete

secret-tool clear service nono username openai_api_key target default

# List all nono secrets

secret-tool search --all service nono

The target default attribute is required for the keyring crate to find the entry.

Using env-credential

CLI Flag

Specify comma-separated account names to load:

# Load single secret

nono run --allow-cwd --env-credential openai_api_key -- claude

# Load multiple secrets

nono run --allow-cwd --env-credential openai_api_key,anthropic_api_key -- claude

| Account Name | Environment Variable |
|---|---|
| openai_api_key | OPENAI_API_KEY |
| anthropic_api_key | ANTHROPIC_API_KEY |
| github_token | GITHUB_TOKEN |
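The mapping in the table is a plain uppercase conversion of each comma-separated account name. The sketch below shows that rule in isolation. The function env_vars_for is a hypothetical name, and the whitespace trimming is an illustrative convenience rather than documented behavior.

```python
def env_vars_for(flag_value: str) -> dict[str, str]:
    """Map each comma-separated account name to its uppercased variable name."""
    names = [name.strip() for name in flag_value.split(",") if name.strip()]
    return {name: name.upper() for name in names}
```

When the default name is not what the program expects, the env_credentials section of a profile lets you choose the variable name explicitly.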

Profile-Based Secrets

Profiles can declare which credentials to load in the env_credentials section (the previous name secrets is still accepted):

{

"meta": { "name": "my-agent" },

"filesystem": {

"allow": ["$WORKDIR"]

},

"env_credentials": {

"openai_api_key": "OPENAI_API_KEY",

"anthropic_api_key": "ANTHROPIC_API_KEY",

"custom_token": "MY_CUSTOM_TOKEN"

}

}

nono run --profile my-agent -- my-agent

The env_credentials section maps keystore account names to environment variable names, giving you full control over naming.

Error Handling

Secret not found:

nono: Secret not found in keystore: openai_api_key

Keystore access failed for 'openai_api_key': ...

Please unlock your keystore and press Enter to retry (or Ctrl+C to abort):

nono: Failed to access system keystore: Multiple entries (2) found for 'api_key' - please resolve manually

Headless Linux Environments

Secret Service (GNOME Keyring) can be problematic on headless servers and SSH sessions because it requires D-Bus and a graphical login.

Option 1: Use pass (Recommended for Headless)

pass uses GPG encryption and works well in headless environments.

# Install and initialize

sudo apt install pass

gpg --gen-key

pass init "your-gpg-key-id"

# Store a secret

pass insert nono/openai_api_key

#!/bin/bash

# ~/bin/nono-with-pass

export OPENAI_API_KEY=$(pass show nono/openai_api_key)

export ANTHROPIC_API_KEY=$(pass show nono/anthropic_api_key)

exec nono run "$@"

Option 2: Environment Variables via Wrapper

For simple setups, export secrets from a protected file:

mkdir -p ~/.config/nono

touch ~/.config/nono/secrets.env

chmod 600 ~/.config/nono/secrets.env

cat > ~/.config/nono/secrets.env << 'EOF'

export OPENAI_API_KEY="sk-..."

export ANTHROPIC_API_KEY="sk-ant-..."

EOF

#!/bin/bash

# ~/bin/nono-env

source ~/.config/nono/secrets.env

exec nono run "$@"

File-based secrets are less secure than a proper keystore. Ensure the file has strict permissions (chmod 600) and is not backed up to insecure locations.

Option 3: Set Up Headless Keyring

If you prefer to use Secret Service in headless mode:

#!/bin/bash

# unlock-keyring.sh - Run once per session

read -s -p "Keyring password: " KEYRING_PASSWORD

echo

if [ -z "$DBUS_SESSION_BUS_ADDRESS" ]; then

eval $(dbus-launch --sh-syntax)

export DBUS_SESSION_BUS_ADDRESS

fi

echo -n "$KEYRING_PASSWORD" | gnome-keyring-daemon --unlock --components=secrets

unset KEYRING_PASSWORD

Add the following to ~/.bashrc or ~/.zshrc for SSH sessions:

if [ -n "$SSH_CONNECTION" ] && [ -z "$DBUS_SESSION_BUS_ADDRESS" ]; then

export $(dbus-launch)

fi

Security Considerations

What nono protects:

- Keystore file access - Sandbox blocks direct access to ~/Library/Keychains (macOS) and keyring files

- Memory exposure - Secrets wrapped in Zeroizing<String> and cleared after use

- Environment variable filtering - Injected credentials bypass the environment.allow_vars allow-list, so they always reach the child process even when other variables are filtered. See Environment Variable Filtering.

Limitations:

- Environment variable visibility - On Linux, /proc/PID/environ is readable by same-user processes. For maximum protection, use proxy injection instead.

- Malicious use of credentials - nono cannot prevent a sandboxed process from misusing legitimately obtained credentials

Best practices:

- Use unique account names (e.g., myapp_openai_key rather than api_key)

- Rotate secrets regularly

- Only grant secrets that are actually needed

- Prefer proxy injection for LLM API keys

Next Steps